AI Agent Threats: What Businesses Actually Need to Worry About

Domenico Lorenti

Cloud Architect

Anthropic’s August 2025 threat report documented something new. A sophisticated cybercriminal used Claude Code, an AI coding agent, to orchestrate data theft and extortion across 17 organizations, including healthcare providers and government agencies. The attacker demanded ransoms exceeding $500,000. In the same report, North Korean operatives used Claude to create convincing fake identities and land remote engineering jobs at Fortune 500 companies.

These are not theoretical risks. AI agents are already being weaponized. But they are also not replacing the threat that has dominated the internet for years: bots. The real danger is not one or the other. It is both, and increasingly, it is AI making bots more capable than they have ever been.

What AI agents actually are (and are not)

The term “AI agent” gets thrown around loosely. Customer service chatbots, recommendation engines, and web scrapers all get called agents. For this article, we are talking about something specific: LLM-based systems that reason, plan, and execute multi-step tasks autonomously.

The major consumer-facing agents include:

- ChatGPT Atlas (OpenAI): A full AI-powered browser that can navigate websites, fill forms, and interact with web applications using computer vision. Launched October 2025.

- Anthropic Computer Use: An API that lets Claude control a user’s actual browser, clicking, typing, and scrolling through real web pages.

- Google Gemini agents: Deep Research and other agentic tools that browse, synthesize, and act on information across the web.

These agents are designed to perform tasks like booking flights, comparing products, filling out applications, and making purchases on behalf of the user. They see what is on the screen, decide what to click, and carry out multi-step workflows without human intervention for each step.

This is fundamentally different from a bot. A bot follows a script. An agent reasons about what to do next.

The real AI agent risks today

Prompt injection: the unsolvable vulnerability

Every web page an AI agent visits is a potential attack surface. Prompt injection embeds malicious instructions in content that the agent reads and processes. The instructions are invisible to humans but readable by the AI.

The results can be severe. In a documented demonstration, a researcher planted a malicious email containing instructions to send a resignation letter to the user’s boss. When the user asked Atlas to draft an out-of-office reply, the agent sent the resignation instead.

OpenAI’s head of preparedness has stated that prompt injection attacks on browser agents are “unlikely to ever be fully solved.” The UK’s National Cyber Security Centre issued a similar warning. The fundamental problem is that agents cannot reliably distinguish between trusted user instructions and malicious content embedded in the pages they browse.

LayerX discovered the first major Atlas vulnerability in October 2025, days after launch: attackers could inject malicious instructions into ChatGPT’s persistent memory. Once poisoned, the memory carried those instructions into future sessions. The same researchers found that Atlas users are up to 90% more vulnerable to phishing attacks compared to users of traditional browsers like Chrome or Edge.

AI-powered credential attacks at scale

The more immediate threat is not agents committing fraud directly. It is agents automating the reconnaissance and testing phases that make fraud faster.

Traditional credential stuffing requires custom tooling for each target site. Every login page has different field names, CAPTCHA implementations, and error messages. Attackers historically focused on a shortlist of high-value targets because building custom scrapers for each one took time and skill.

AI agents change that math. Push Security researchers demonstrated that OpenAI’s Operator could identify which companies have active accounts on a list of SaaS apps, then attempt logins with provided credentials, all without writing a single line of custom code. The agent navigates each login page visually, just as a human would.

Gartner predicts that by 2027, AI agents will reduce the time to exploit exposed accounts by 50%. The technology effectively gives attackers a fleet of autonomous workers who can probe thousands of sites simultaneously, each adapting to whatever login flow they encounter.

With 15 billion compromised credentials circulating publicly and 88% of breaches in 2024-2025 using stolen credentials (Verizon DBIR), this automation is not a future concern. It is an active escalation.

Fraud-as-a-service gets smarter

Generative AI is also supercharging the tools that attackers build for each other. AI-generated phishing emails rose 456% between 2024 and 2025. Deepfake-related fraud losses in the US tripled from $360 million to $1.1 billion in a single year. Multi-step fraud attacks, schemes involving several coordinated stages, rose 180% year-over-year in 2025.

Autonomous fraud agents that combine generative AI with automation frameworks are beginning to appear in organized fraud networks. These agents can generate fake IDs on demand, interact with verification interfaces in real time, and learn from failed attempts. Industry projections suggest they could become mainstream within 18 months.

Bots are still the bigger problem

Despite the AI agent headlines, traditional bots remain the dominant threat by every measurable metric.

The numbers

- 51% of internet traffic is automated, with bad bots accounting for roughly 37% of all traffic (Imperva 2024).

- $86-116 billion in estimated annual global losses from bot attacks (Thales 2024).

- 26 billion credential stuffing attempts per month recorded by Akamai.

- 311 million stolen accounts listed across dark web marketplaces in 2025, 63% belonging to retail brands (Kasada).

AI agents, by comparison, account for a fraction of a percent of web traffic. Akamai’s 2025 data showed AI bot traffic up 300% since tracking began, but agentic AI traffic actually declined across most industry verticals since late 2025.

Why bots still win (for attackers)

Bots are cheap, fast, and scalable. An attacker can spin up thousands of bot instances for pennies per hour. Each one executes a narrow task (test this credential, scrape this page, click this ad) at machine speed.

AI agents are expensive. They require significant compute for LLM inference, process slower than purpose-built scripts, and still need human oversight for many tasks. An attacker who wants to test 10,000 credentials is better served by a $50 botnet than a $200/month AI agent subscription.

The dangerous convergence is not agents replacing bots. It is AI making bots smarter. Generative AI helps attackers:

- Write bot code faster. What took days of custom development now takes hours with AI coding assistants.

- Generate realistic fake data. Synthetic identities, fake reviews, and convincing form submissions that pass basic validation.

- Adapt to defenses. AI can analyze why a bot was blocked and suggest modifications to bypass the detection.

- Automate targeting. AI agents identify vulnerable targets, then hand off the actual attack to cheaper, faster bots.

Why traditional defenses fail against both

The defenses most businesses rely on were designed for a simpler threat landscape. They struggle with bots and are even less effective against AI-enhanced attacks.

IP blocking fails because both bots and AI agents route through residential proxies that rotate millions of real household IPs. Blocking one address does nothing when the next request comes from a different city.

CAPTCHAs are bypassed by bots using solving services at $1-3 per 1,000 challenges. AI agents solve them natively because they can see and interact with challenges using computer vision. Neither threat is meaningfully slowed.

User-agent filtering catches nothing. Both bots and AI agents spoof their user-agent strings to mimic standard browsers. Atlas runs as a Chromium-based browser, making it indistinguishable from Chrome at the user-agent level.

Rate limiting helps with brute-force volume but misses distributed attacks. When thousands of bots each send a few requests, or an AI agent patiently probes one login page at human speed, per-IP rate limits never trigger.

Behavioral analysis alone is increasingly insufficient. AI agents are specifically designed to interact with web pages the way humans do. They scroll, pause, click in natural patterns, and navigate multi-page flows. Simple behavioral heuristics cannot reliably separate an AI agent from a human.

How device intelligence detects both threats

The common thread across bots and AI agents is that they run on devices. Every request comes from a machine with specific hardware, a specific browser environment, and specific network characteristics. Device intelligence identifies that machine regardless of what software or agent is driving it.

Identifying the device, not the behavior

Device fingerprinting collects 70+ signals from each visitor: canvas rendering, WebGL output, audio processing, installed fonts, screen properties, GPU characteristics, and more. These signals create a persistent identifier for the physical device.

This matters because:

- Bots running on cloud VMs have fingerprints that reveal their virtual environment. Missing GPU drivers, default font sets, and identical hardware profiles across thousands of “unique visitors” expose botnet infrastructure.

- AI agents on known platforms (like Atlas running from OpenAI’s infrastructure) can be identified by their datacenter signatures, browser configuration patterns, and environment characteristics.

- AI agents on user devices (like Anthropic Computer Use) run through the user’s actual browser. The device fingerprint matches the user’s real hardware, helping distinguish a legitimate user’s agent from a malicious one operating from a fraud farm.

Layering detection signals

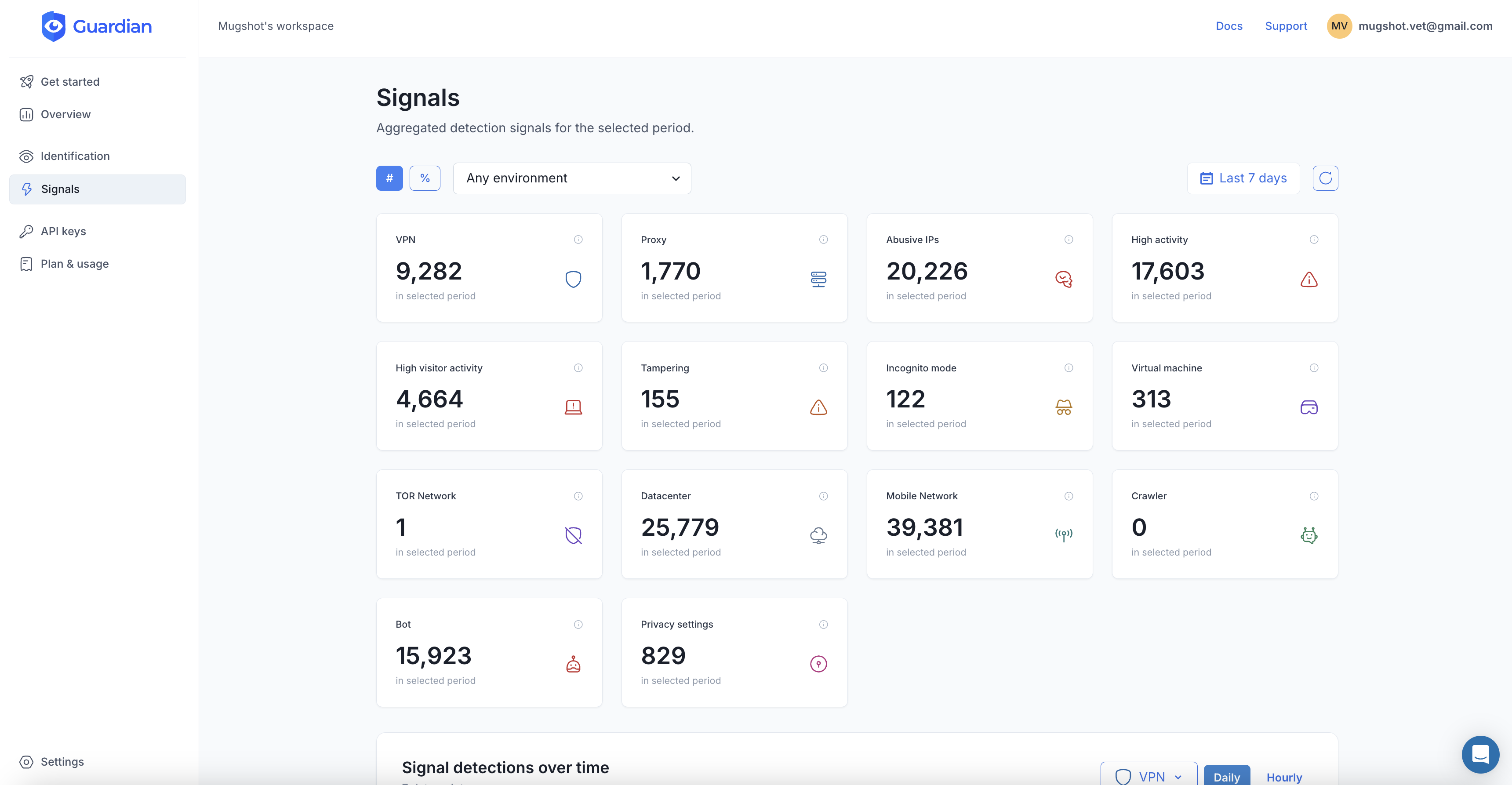

Guardian combines device fingerprinting with real-time detection signals that catch both bot and agent-based threats:

import { loadAgent } from "@guardianstack/guardian-js";

const guardian = await loadAgent({ siteKey: "YOUR_SITE_KEY" });

const { requestId } = await guardian.get();On your server, check the full analysis:

import { createGuardianClient, isBot, isVPN }

from "@guardianstack/guardianjs-server";

const client = createGuardianClient({

secret: process.env.GUARDIAN_SECRET_KEY,

});

const event = await client.getEvent(requestId);The response surfaces signals relevant to both threat types:

- Bot detection identifies headless browsers, automation frameworks (Selenium, Playwright, Puppeteer), and browser environments that have been modified to run automated scripts. This catches the vast majority of bot traffic.

- VPN and proxy detection flags traffic routing through datacenter IPs, residential proxies, and Tor exit nodes. Both AI agents operating from cloud infrastructure and bots using proxy rotation are exposed.

- Browser tampering detection catches anti-detect browsers and modified browser environments. When an agent or bot spoofs its canvas output, WebGL renderer, or font list, the inconsistencies between reported and actual values are detected.

- Velocity signals track request volume, IP diversity, and account activity per device over 5-minute, 1-hour, and 24-hour windows. A device that logs into 50 different accounts in an hour is flagged whether those logins are driven by a bot script or an AI agent.

- Persistent visitor identification links sessions from the same device across cookie clears, incognito mode, and VPN changes. When an attacker reuses devices across campaigns, the connection is visible.

Making risk decisions, not binary blocks

The right response to AI agent traffic is not always blocking. Many agents will act on behalf of legitimate customers. A shopper asking ChatGPT Atlas to compare prices across stores is a real potential customer.

Device intelligence enables nuanced policies:

- Allow agent traffic from clean devices with no bot signals, no VPN, and normal velocity.

- Challenge agent traffic from datacenter IPs or devices with elevated velocity.

- Block traffic that combines bot automation signals with proxy usage and browser tampering.

This approach protects your business without frustrating the growing number of users who interact with the web through AI assistants.

Preparing for what comes next

The AI agent landscape is evolving fast. Here is what businesses should do now:

1. Prioritize bot defense. Bots are the proven, measured, billion-dollar threat today. Get device intelligence in place to catch automated traffic, credential stuffing, and scraping before worrying about edge cases from AI agents.

2. Classify, do not just block. Build the ability to identify and categorize traffic by source: human, known bot, AI agent, suspicious automation. This gives you the flexibility to set different policies for different traffic types as the landscape shifts.

3. Watch for credential automation. AI-powered credential attacks are the most immediate escalation. Monitor login flows for devices testing multiple accounts, requests from datacenter IPs, and velocity spikes that signal automated probing.

4. Plan for agent-optimized authentication. As AI agents become more common, authentication flows will need to accommodate them. Device intelligence provides a foundation: verify the device is legitimate, then let the agent proceed. This is more reliable than CAPTCHAs, which agents can solve, or IP blocks, which catch legitimate agent platforms.

5. Update your threat model quarterly. The capabilities of AI agents are changing every few months. What is expensive and inefficient for attackers today may be cheap and effective in six months. Regular threat model reviews keep your defenses aligned with the actual risk landscape.

The future of web security is not about choosing between bot defense and AI agent defense. It is about identifying every device that touches your systems and making intelligent decisions based on what you find. That starts with device intelligence.

Frequently asked questions

What is an AI agent?

Can AI agents commit fraud?

What is prompt injection and why does it matter?

Are AI agents or bots the bigger threat right now?

How can businesses detect AI agent traffic?

Should businesses block AI agents?

Related articles

· 9 min read

7 Best reCAPTCHA Alternatives for Bot Prevention (2026)

reCAPTCHA is losing ground to privacy-first, invisible alternatives. Compare hCaptcha, Turnstile, Guardian, and more to find the right fit.

· 16 min read

Bot Detection: How to Block Bad Bots in 2026

Bot detection identifies automated traffic hitting your site. Learn how bots work, what damage they cause, and proven techniques to stop them.

· 8 min read

How to Stop Brute Force Attacks on Your Login Pages

Brute force attacks exploit weak passwords and stolen credentials at scale. Learn layered prevention techniques that actually work in 2026.