Deepfakes vs. Gambling KYC: Why Identity Checks Are Failing

Domenico Lorenti

Cloud Architect

In Q1 2025, Sumsub’s identity verification systems flagged a 700% increase in deepfake fraud compared to Q1 2024. In the same period, synthetic identity document fraud rose 195%. A deepfake video that could bypass a selfie-based liveness check cost as little as $200 on dark web marketplaces. Meanwhile, the humans reviewing these checks correctly identified high-quality deepfakes only 24.5% of the time.

These numbers represent a fundamental shift in the fraud landscape for online gambling. KYC verification, the compliance layer that every regulated operator relies on to confirm player identity, is being systematically defeated by AI-generated faces, documents, and identities. The question is no longer whether deepfakes can bypass gambling KYC. They already do. The question is what comes next.

The deepfake threat to gambling KYC

KYC in online gambling typically involves three steps: document upload (passport, driver’s license, or national ID), selfie capture or liveness check (to match the document photo to the real person), and address verification. Deepfakes target the second step, and synthetic documents target the first.

How deepfakes defeat liveness checks

Modern deepfake tools generate synthetic video in real time. The fraudster uploads a stolen identity document, then uses deepfake software to generate a face that matches the document photo. During the liveness check, the deepfake can blink, turn its head, smile on command, and respond to random prompts designed to catch static images.

The technology is accessible and cheap. A basic cloud-based face-swap tool costs approximately $20 for five hours of usage. A high-quality deepfake video runs $200-$20,000 depending on sophistication. For a fraud operation creating hundreds of accounts, the per-account cost of deepfake KYC bypass is negligible compared to the potential return from bonus abuse or money laundering.
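
To make "negligible" concrete, here is a rough back-of-envelope sketch. Only the $20 for five hours figure comes from the paragraph above. The minutes per verification attempt and the bonus value are hypothetical placeholders, not measured data, so treat the output as an illustration of the arithmetic rather than a real estimate.

```javascript
// Back-of-envelope cost per KYC attempt with a rented face-swap tool.
// toolCostUsd and toolHours come from the article; everything else is assumed.
function costPerAttempt({ toolCostUsd, toolHours, minutesPerAttempt }) {
  const attemptsPerRental = Math.floor((toolHours * 60) / minutesPerAttempt);
  return toolCostUsd / attemptsPerRental;
}

const perAttempt = costPerAttempt({
  toolCostUsd: 20,       // from the article: about $20 for five hours
  toolHours: 5,
  minutesPerAttempt: 10, // hypothetical: one liveness session plus setup
});

const hypotheticalBonusUsd = 100; // placeholder welcome bonus, not a real figure

console.log(perAttempt.toFixed(2));                         // 0.67
console.log(Math.round(hypotheticalBonusUsd / perAttempt)); // 150
```

Under these assumed inputs, each attempt costs well under a dollar, and a single bonus would cover roughly 150 attempts. Changing the placeholders shifts the ratio, but the tool rental stays a small part of the equation.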

The detection gap is the real problem. AI deepfake detection models achieve high accuracy in controlled lab environments, but that accuracy drops 45-50% in real-world conditions. Variable lighting, camera quality, compression artifacts, and network bandwidth all degrade detection performance. Deepfake technology also improves continuously, so detection models trained on older-generation fakes are less effective against newer techniques.

Synthetic identities: the document problem

Deepfakes solve the selfie problem. Synthetic identities solve the document problem.

A synthetic identity combines real data fragments with fabricated details. The Social Security number belongs to a deceased person or a child. The address is a real address pulled from public records. The name and date of birth are fabricated. An AI-generated headshot completes the profile. The resulting identity does not belong to any real person but has enough legitimate data points to pass document verification.

Sumsub’s 2025 annual report found that synthetic identities were used in 21% of first-party fraud cases. AI-generated document forgery, a new category that barely registered in 2024, now accounts for 2% of all document fraud and is rising rapidly. Synthetic identity fraud drives up to 80% of all new account fraud globally, according to CoinLaw research.

For gambling operators, synthetic identities are particularly dangerous because they are not stolen from a real person. There is no victim to report the fraud, no credit alert to trigger, and no identity owner to dispute the account. The synthetic identity exists purely to commit fraud and then disappear.

The agentic AI escalation

The emerging threat is not individual deepfakes. It is autonomous AI systems that execute entire fraud workflows. Sumsub’s 2025 annual report documented the rise of “agentic AI fraud”: autonomous systems that generate fake identities, interact with verification interfaces in real time, and learn from failed attempts to improve future success.

These systems can create a synthetic identity, generate matching documents and a deepfake face, complete a KYC process, create a gambling account, claim a welcome bonus, meet wagering requirements through automated betting, and initiate a withdrawal, all with minimal human intervention. The share of multi-step attacks rose from 10% to 28% of all identity fraud between 2024 and 2025, indicating that automated, sequenced fraud is becoming the norm.

Why KYC alone cannot protect gambling operators

The data is clear: KYC is a compliance requirement, not a fraud prevention tool in its current form.

The post-KYC fraud problem

Sumsub reports that 76% of fraud attempts in gambling occur after the KYC process is complete. This means the majority of fraud happens during day-to-day account activity: deposits, bonus claims, betting, and withdrawals. KYC creates a single checkpoint at account creation. Everything after that checkpoint is unmonitored by the identity verification layer.

A fraudster who passes KYC with a synthetic identity has a fully verified, “legitimate” account. They can claim bonuses, deposit funds, place bets, and withdraw money. Nothing in the KYC system flags their ongoing activity because, as far as the system knows, they are a verified customer.

The multi-identity blind spot

KYC verifies that Account A belongs to Identity A. It does not verify that Identity A, Identity B, Identity C, and Identity D are all controlled by the same person. Each multi-accounting identity passes verification independently. KYC has no mechanism to link them.

This is the core limitation. A fraudster with 50 synthetic identities that each pass KYC has 50 verified accounts. Each one claims a welcome bonus. Each one appears to be a unique new customer. KYC not only fails to prevent the fraud, it actively legitimizes it.

The verification arms race

Every improvement in KYC verification is met with an improvement in bypass technology. Platforms add liveness checks; fraudsters deploy real-time deepfakes. Platforms add document analysis; fraudsters use AI to generate convincing forgeries. Platforms add address verification; fraudsters use real addresses from public records.

Gartner predicts that by 2026, 30% of enterprises will no longer consider identity verification and authentication solutions reliable in isolation because of AI-generated deepfakes. This is not a prediction about the distant future. It is a statement about the current state of the technology.

The regulatory pressure

Regulators are responding to the deepfake threat, but their tools are primarily focused on tightening KYC requirements rather than moving beyond them.

UKGC enforcement

The UKGC launched a new financial penalties framework in October 2025. The most serious breaches carry fines of up to 15% of gross gambling yield. Platinum Gaming received a GBP 10 million fine for AML and responsible gambling failures in the same month. The UKGC requires operators to verify identity before any gambling activity, but the framework assumes that identity verification works. As deepfakes and synthetic identities undermine that assumption, operators face a compliance gap.

MGA tightening

The Malta Gaming Authority rejected over 70% of licence applications in the first half of 2025 and imposed EUR 139,360 in penalties across 23 administrative actions. The MGA has shifted to a risk-based, evidence-driven regulatory model, and operators must demonstrate that their fraud prevention measures go beyond basic KYC compliance.

Curacao gaming reform

Curacao completed its gaming reform in 2025, replacing the old sub-licensing system with a single Curacao Gaming Authority. From January 2026, all licensees must implement robust KYC and AML measures, maintain physical offices in Curacao, and demonstrate adequate player protection systems. Even historically relaxed jurisdictions are raising the bar.

The compliance gap

The uncomfortable reality is that regulators mandate KYC as a fraud prevention tool, but KYC is being systematically defeated by AI. Operators who rely solely on KYC comply with the letter of the regulation but not the spirit. Forward-thinking regulators are beginning to recognize that device-level verification is a necessary complement to identity verification, but formal requirements have not caught up.

Device intelligence: the layer deepfakes cannot defeat

Deepfakes target the relationship between a face and an identity. Device intelligence targets something entirely different: the physical machine behind the screen. A fraudster can generate a convincing synthetic face. It is far harder to change the hardware fingerprint of the laptop or phone they use to present it.

How device fingerprinting works alongside KYC

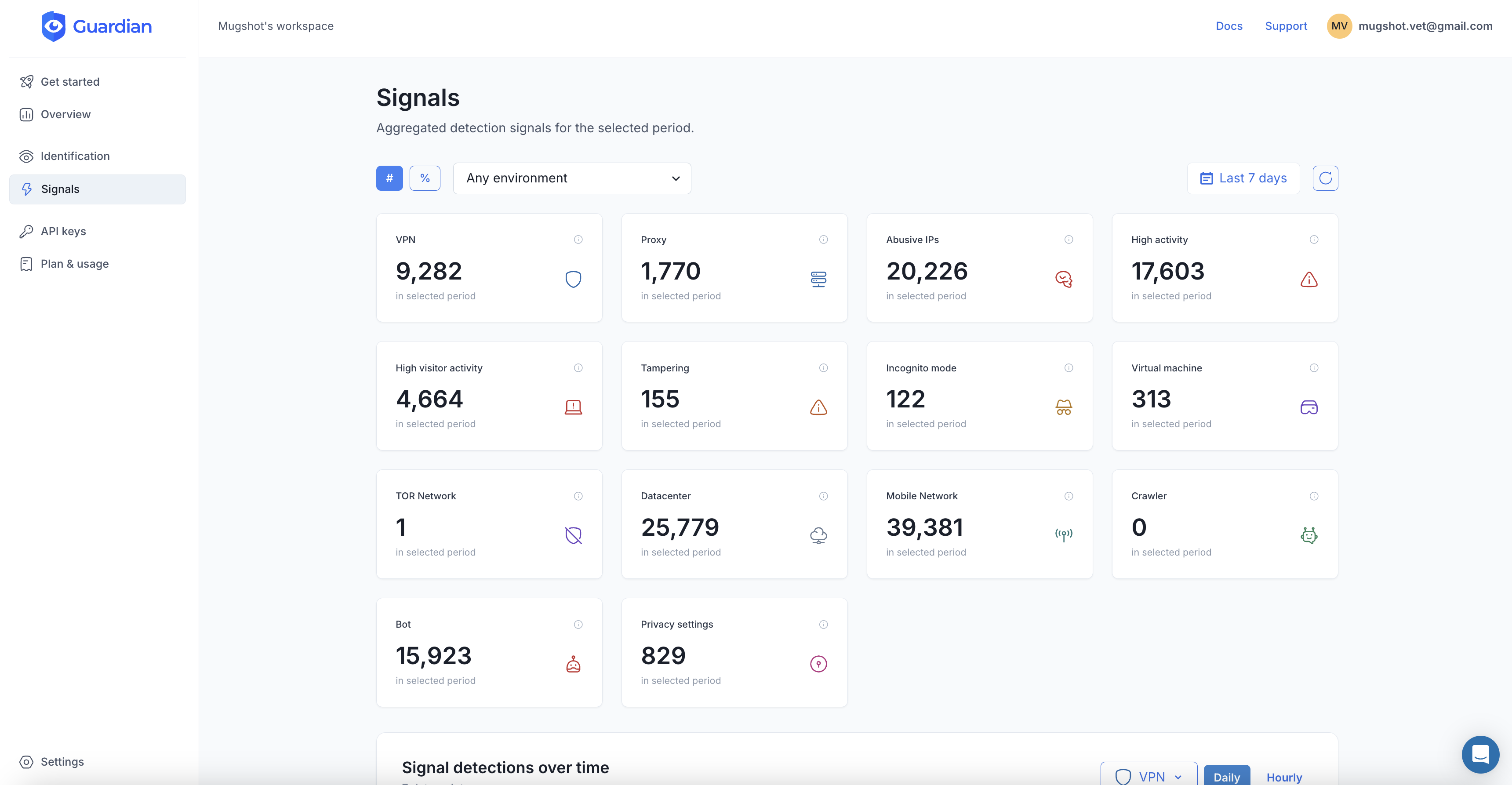

Device fingerprinting collects 70+ signals from each visitor’s hardware and browser: GPU renderer, canvas rendering output, audio processing characteristics, WebGL data, screen properties, installed fonts, and more. These signals create a persistent identifier for the physical device that survives cookie clears, incognito mode, and browser changes.

When a fraudster passes KYC with five different synthetic identities using the same device and browser, all five verifications produce the same fingerprint. KYC sees five unique people. Device intelligence sees one machine. Even if the fraudster switches browsers between submissions, storing all historical fingerprints per account lets the platform cluster identifiers that share hardware signals. The combination of identity verification plus device verification is far more powerful than either layer alone.
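
The clustering idea can be sketched as follows. This is a minimal illustration, not how any particular vendor does it: the signal names (`gpuRenderer`, `screen`, `cpuCores`), the exact-match rule, and the sample records are all assumptions. Production systems typically use many more signals and fuzzy matching.

```javascript
// Cluster accounts by browser-independent hardware signals.
// Signal names and the exact-match rule are illustrative assumptions.
function hardwareKey({ gpuRenderer, screen, cpuCores }) {
  return [gpuRenderer, screen, cpuCores].join('|');
}

function clusterByHardware(fingerprints) {
  const clusters = new Map();
  for (const fp of fingerprints) {
    const key = hardwareKey(fp.hardware);
    if (!clusters.has(key)) {
      clusters.set(key, { accounts: new Set(), visitorIds: new Set() });
    }
    const cluster = clusters.get(key);
    cluster.accounts.add(fp.accountId);
    cluster.visitorIds.add(fp.visitorId);
  }
  // Keep only hardware profiles that were seen with more than one account.
  return [...clusters.values()]
    .filter((c) => c.accounts.size > 1)
    .map((c) => ({ accounts: [...c.accounts], visitorIds: [...c.visitorIds] }));
}

// Made-up history: one machine used from two browsers, plus an unrelated device.
const sameMachine = { gpuRenderer: 'GPU X', screen: '1920x1080', cpuCores: 8 };
const fingerprints = [
  { accountId: 'A', visitorId: 'v-chrome', hardware: sameMachine },
  { accountId: 'B', visitorId: 'v-chrome', hardware: sameMachine },
  { accountId: 'C', visitorId: 'v-firefox', hardware: sameMachine },
  { accountId: 'D', visitorId: 'v-other', hardware: { gpuRenderer: 'GPU Y', screen: '1366x768', cpuCores: 4 } },
];

console.log(JSON.stringify(clusterByHardware(fingerprints)));
// [{"accounts":["A","B","C"],"visitorIds":["v-chrome","v-firefox"]}]
```

Two people with the same laptop model would share this simplified key, so a match like this is better treated as a reason for review than as proof of multi-accounting.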

Catching post-KYC fraud

Because device fingerprinting operates continuously (not just at registration), it catches fraud patterns that KYC misses entirely. When a verified account suddenly connects from a device linked to 20 other accounts, the risk profile changes dramatically, regardless of how clean the identity verification was.

This addresses the 76% of fraud that occurs post-KYC. Device signals are checked at every interaction: login, deposit, bonus claim, and withdrawal. The device is the constant signal across all these touchpoints.

Detecting browser tampering

Fraudsters who use anti-detect browsers to spoof device fingerprints leave detectable traces. Guardian’s browser tampering detection identifies inconsistencies between claimed and actual hardware characteristics. A browser profile spoofing a MacBook but rendering graphics with Intel integrated GPU drivers on a Windows system reveals the deception.

Even the best anti-detect browsers cannot perfectly replicate the full hardware fingerprint of a genuinely different device. The more signals collected, the harder perfect spoofing becomes.
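
As a toy illustration of this kind of consistency check, the sketch below compares the operating system claimed in the user agent with the graphics backend named in the WebGL renderer string. It is not Guardian's detection logic. The function names and the two rules are assumptions chosen for clarity, and a real detector would combine many more signals.

```javascript
// Toy consistency check between the claimed OS and the graphics backend.
// NOT Guardian's detection logic; the rules below are illustrative assumptions.
function claimedOs(userAgent) {
  if (/Windows NT/.test(userAgent)) return 'windows';
  if (/Mac OS X/.test(userAgent)) return 'macos';
  return 'other';
}

function findInconsistencies({ userAgent, webglRenderer }) {
  const issues = [];
  const os = claimedOs(userAgent);
  // Direct3D is a Windows graphics API, so it should not appear on a Mac.
  if (os === 'macos' && /Direct3D|D3D11/i.test(webglRenderer)) {
    issues.push('macos_ua_with_direct3d_renderer');
  }
  // Metal is Apple's graphics API, so it should not appear on Windows.
  if (os === 'windows' && /Metal/i.test(webglRenderer)) {
    issues.push('windows_ua_with_metal_renderer');
  }
  return issues;
}

// A profile claiming to be a Mac while rendering through a Windows backend.
const spoofedProfile = {
  userAgent: 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36',
  webglRenderer: 'ANGLE (Intel, Intel(R) UHD Graphics 620 Direct3D11 vs_5_0 ps_5_0, D3D11)',
};

console.log(findInconsistencies(spoofedProfile));
// [ 'macos_ua_with_direct3d_renderer' ]
```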

Implementing device intelligence for gambling KYC

The integration complements existing KYC workflows without replacing them.

At registration (alongside KYC)

```javascript
// Client-side: collect device signals during the KYC flow
import { loadAgent } from '@guardianstack/guardian-js';

const guardian = await loadAgent({
  siteKey: 'YOUR_SITE_KEY',
});

const { requestId } = await guardian.get();
// Send requestId with the KYC submission
```

```javascript
// Server-side: combine KYC result with device intelligence
import {
  createGuardianClient,
  isTampering,
  isVPN,
} from '@guardianstack/guardianjs-server';

const client = createGuardianClient({
  secret: process.env.GUARDIAN_SECRET_KEY,
});

// requestId and accountId arrive with the KYC submission
const event = await client.getEvent(requestId);
const { visitorId } = event;

// Check if this device has been used for other accounts' KYC verifications.
// db and escalateToManualReview are application helpers; db.query is assumed
// to resolve to an array of rows (with node-postgres, read result.rows instead).
const linkedVerifications = await db.query(
  'SELECT account_id, kyc_status FROM verifications WHERE visitor_id = $1 AND account_id <> $2',
  [visitorId, accountId]
);

if (linkedVerifications.length > 0) {
  // Same device submitted KYC for multiple identities
  await escalateToManualReview(accountId, {
    reason: 'device_linked_to_multiple_identities',
    linkedAccounts: linkedVerifications,
    tamperingDetected: isTampering(event),
    vpnDetected: isVPN(event),
  });
}
```

At every authentication event

```javascript
// Check device on every login, deposit, bonus claim, and withdrawal
// (reuses client, isTampering, and isVPN from the server-side setup)
async function checkDeviceRisk(requestId, accountId, actionType) {
  const event = await client.getEvent(requestId);
  const { visitorId } = event;

  const riskSignals = {
    tamperingDetected: isTampering(event),
    vpnDetected: isVPN(event),
    linkedAccounts: await getLinkedAccounts(visitorId),
    velocity: event.velocity,
  };

  // Device changed since last session
  const lastDevice = await getLastDeviceForAccount(accountId);
  if (lastDevice && lastDevice !== visitorId) {
    riskSignals.deviceChanged = true;
  }

  return calculateRiskScore(riskSignals, actionType);
}
```

Risk-based decision framework

Device intelligence supports a graduated response rather than binary accept/reject:

- KYC passes, device clean, no linked accounts: Approve automatically. This is a legitimate new player.

- KYC passes, device linked to 1-2 other accounts: Approve but monitor. Could be a shared household device.

- KYC passes, device linked to 5+ accounts: Block and escalate. This is likely a multi-accounting operation.

- KYC passes, browser tampering detected: Block and require manual verification. Anti-detect browsers indicate intent to deceive.

- KYC passes, VPN detected, new device, high velocity: Apply enhanced due diligence. Multiple risk factors in combination indicate elevated fraud risk.
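
The graduated responses above can be sketched as a single decision function. This is a minimal illustration under stated assumptions: the function name, the input field names, and the order in which rules are checked are hypothetical. The list also does not say what to do with 3-4 linked accounts, so the sketch routes that range to manual review as a placeholder.

```javascript
// Map a KYC-passed session to one of the graduated responses.
// Field names, rule order, and the 3-4 linked accounts case are assumptions.
function decide({
  linkedAccountCount = 0,
  tamperingDetected = false,
  vpnDetected = false,
  newDevice = false,
  highVelocity = false,
}) {
  if (tamperingDetected) return 'block_manual_verification';
  if (linkedAccountCount >= 5) return 'block_and_escalate';
  if (vpnDetected && newDevice && highVelocity) return 'enhanced_due_diligence';
  if (linkedAccountCount >= 3) return 'manual_review'; // assumption: gap in the list
  if (linkedAccountCount >= 1) return 'approve_and_monitor';
  return 'approve';
}

console.log(decide({}));                          // approve
console.log(decide({ linkedAccountCount: 2 }));   // approve_and_monitor
console.log(decide({ linkedAccountCount: 7 }));   // block_and_escalate
console.log(decide({ tamperingDetected: true })); // block_manual_verification
```

Checking tampering first reflects the list's view that anti-detect browsers indicate intent to deceive. An operator could reasonably order the rules differently or replace them with a weighted score.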

The path forward

The gambling industry is facing a technology inflection point. KYC was designed for a world where identity documents were physical objects and faces could not be synthesized. That world no longer exists.

The solution is not to abandon KYC. Regulatory compliance requires it, and it still catches unsophisticated fraud. The solution is to stop treating KYC as a fraud prevention tool and start treating it as one layer in a multi-layer defense.

Device intelligence provides the layer that AI-powered fraud cannot easily defeat. Faces can be deepfaked. Documents can be forged. IP addresses can be proxied. But the physical device, the laptop, the phone, the tablet behind every session, remains a persistent, verifiable signal.

82.9% of gambling operators say fraud is getting worse. The operators who add device-level verification to their KYC workflows will catch the fraud that deepfakes enable. The operators who do not will continue paying for it.

